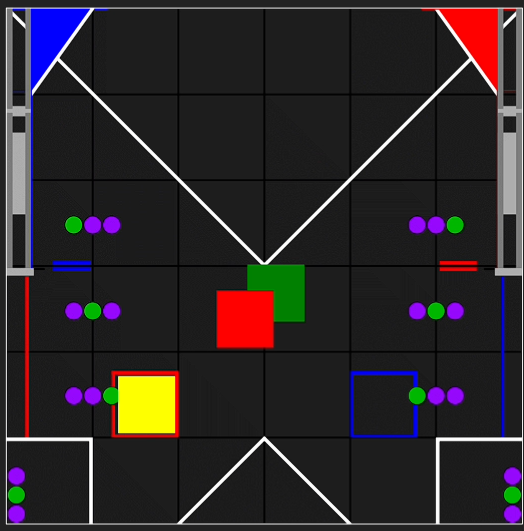

In this season's FIRST® Tech Challenge (FTC) game, robots had the deceptively simple job of shooting multicolored balls into a triangular goal as fast as possible. This year, I was the Programming Lead and Co-Founder of my school’s team, coding all of the robot’s functions, particularly the shooter, auto align, and autonomous routines. Through our efforts, our team won the Control Award (which “celebrates a team that uses sensors and software to increase the ROBOT’S functionality during gameplay”) at two of our events, won the Semi-Regional Tournament, and placed 3rd at the UIL State Championship!

However, from the start, our team faced time constraints unlike anything I had experienced during my previous FRC season (REEFSCAPE): because funding and approval from my school came late, we started from zero with only 11 days before our first official competition, and we had to scramble to build and program the robot. Since the majority of our funding came through the school ($4,070 in total), we decided that hardware iteration would be too costly, meaning that software would have to cover the lost ground. Therefore, I built on my previous experience and focused on three main aspects to maximize our robot’s capabilities in that time frame: shooter consistency, autonomous alignment, and autonomous routines.

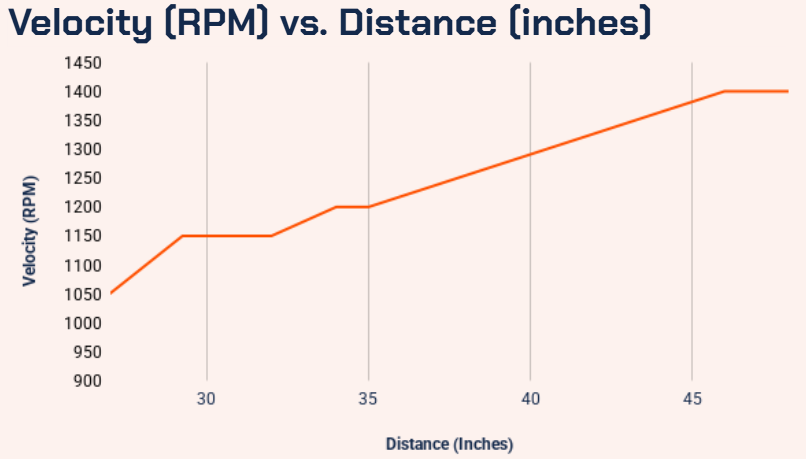

After implementing variable shooting speed using the lookup table, shooting location

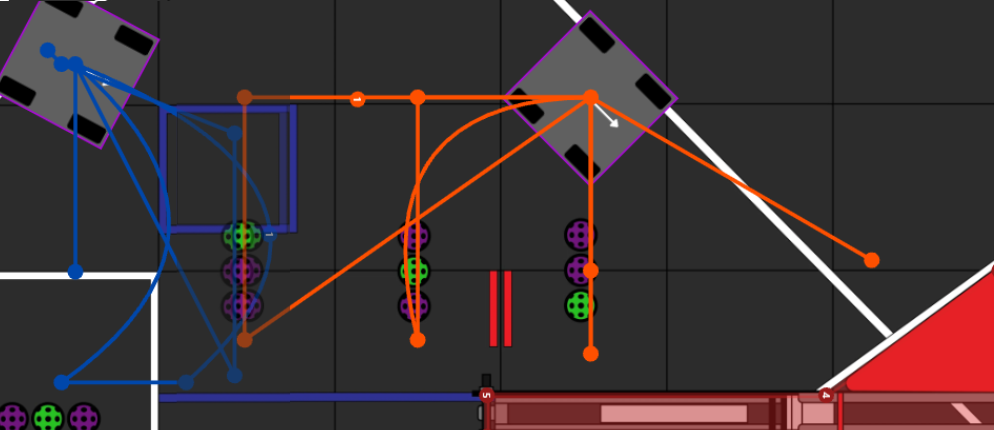

using auto alignment, and pose estimation, I worked on improving our autonomous routine. Originally, I only had time to make a six ball autonomous: one that shot our preloaded balls and then picked up and shot one more. After our first competition, though, I had more time to iterate and improve our autonomous routine. Using PedroPathing, an autonomous pathing software that allowed me to combine point-to-point (p2p) and Bézier curve paths, I was able to create a robust set of autonomous routines and even iterate on them during the competition! By the end of UIL States, we had eight different autonomous routines (four on each side) that we could choose based on our alliance partner, ensuring adaptability that other teams often did not have.
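For readers unfamiliar with Bézier paths, here is a minimal, standalone sketch of the math behind them. It is not Pedro Pathing's API or our robot code: the class name `BezierSketch`, the method `point`, and the control points (in inches) are all hypothetical, chosen only for illustration. It evaluates a curve with de Casteljau's algorithm, which repeatedly interpolates between neighboring control points until one point remains.

```java
public final class BezierSketch {
    private BezierSketch() {}

    /**
     * Evaluates a Bezier curve at parameter t (0 = start, 1 = end) using
     * de Casteljau's algorithm. Each control point is {x, y}.
     * Hypothetical helper for illustration; not part of any FTC library.
     */
    public static double[] point(double[][] control, double t) {
        int n = control.length;
        if (n == 0) {
            throw new IllegalArgumentException("at least one control point is required");
        }
        // Copy the control points so the caller's array is left untouched.
        double[][] work = new double[n][2];
        for (int i = 0; i < n; i++) {
            work[i][0] = control[i][0];
            work[i][1] = control[i][1];
        }
        // Each pass blends neighboring points, shrinking the list by one.
        for (int level = n - 1; level > 0; level--) {
            for (int i = 0; i < level; i++) {
                work[i][0] = (1 - t) * work[i][0] + t * work[i + 1][0];
                work[i][1] = (1 - t) * work[i][1] + t * work[i + 1][1];
            }
        }
        return new double[] {work[0][0], work[0][1]};
    }

    public static void main(String[] args) {
        // Assumed example: start at (0, 0), bend around (24, 0), end at (24, 24).
        double[][] curve = {{0, 0}, {24, 0}, {24, 24}};
        for (int i = 0; i <= 4; i++) {
            double t = i / 4.0;
            double[] p = point(curve, t);
            System.out.printf("t=%.2f -> (%.2f, %.2f)%n", t, p[0], p[1]);
        }
    }
}
```

With only two control points, the same function traces a straight line between them, which is one way to see how point-to-point moves and curved segments can live in the same path description.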

Below are some videos of our different autonomous routines (we are the black robot with the number 33791):